How do you know what to automate first on your network? Here are three steps to put you on the right path.

Read More at Enable Sysadmin

3 steps to identifying Linux system automation candidates

Technical Evaluations: 6 questions to ask yourself

Use these six questions to determine whether a solution actually solves the business problem you’re addressing.

Read More at Enable Sysadmin

Paving the path to organizational goals: Consider the bridge not built

Defining and understanding value in your organization

Jonathan Roemer

Wed, 4/7/2021 at 9:26pm

Image by esudroff from Pixabay

Thomas Sowell opines in Basic Economics that “the real cost of anything is still its value in alternative uses. The real cost of building a bridge is whatever else could have been built with that same labor and material. The cost of watching a television sitcom or soap opera is the value of the other things that could have been done with that same time.”

Topics:

Linux

Career

Read More at Enable Sysadmin

6 advanced tcpdump formatting options

The final article in this three-part tcpdump series covers six more tcpdump packet-capturing options.

Read More at Enable Sysadmin

6 options for tcpdump you need to know

Six more tcpdump command options to simplify and filter your packet captures.

Read More at Enable Sysadmin

6 tcpdump network traffic filter options

The first six of eighteen common tcpdump options that you should use for network troubleshooting and analysis.

Kedar Vijay Kulkarni

Tue, 4/6/2021 at 1:58pm

Image by Pexels from Pixabay

The tcpdump utility is used to capture and analyze network traffic. Sysadmins can use it to view real-time traffic or save the output to a file and analyze it later. In this three-part article, I demonstrate several common options you might want to use in your day-to-day operations with tcpdump.

Topics:

Linux

Linux Administration

Command line utilities

Read More at Enable Sysadmin

Exploring PKI weaknesses and how to combat them

Public key infrastructure has some known weaknesses. See how Certificate Authority Authorization and Certificate Transparency help strengthen certificate infrastructures.

Read More at Enable Sysadmin

Scaling Microservices on Kubernetes

By Ashley Davis

This article was originally published at The New Stack.

Applications built on microservices can be scaled in multiple ways. We can scale them to support development by larger development teams and we can also scale them up for better performance. Our application can then have a higher capacity and can handle a larger workload.

Using microservices gives us granular control over the performance of our application. We can easily measure the performance of our microservices to find the ones that are performing poorly, are overworked, or are overloaded at times of peak demand. Figure 1 shows how we might use the Kubernetes dashboard to understand CPU and memory usage for our microservices.

If we were using a monolith, however, we would have limited control over performance. We could vertically scale the monolith, but that’s basically it.

Horizontally scaling a monolith is much more difficult, and we simply can’t independently scale any of the “parts” of a monolith. This isn’t ideal, because it might only be a small part of the monolith that causes the performance problem. Yet, we would have to vertically scale the entire monolith to fix it. Vertically scaling a large monolith can be an expensive proposition.

Instead, with microservices, we have numerous options for scaling. For instance, we can independently fine-tune the performance of small parts of our system to eliminate bottlenecks and achieve the right mix of performance outcomes.

There are also many advanced ways we could tackle performance issues, but in this post, we’ll look at a handful of relatively simple techniques for scaling our microservices using Kubernetes:

- Vertically scaling the entire cluster

- Horizontally scaling the entire cluster

- Horizontally scaling individual microservices

- Elastically scaling the entire cluster

- Elastically scaling individual microservices

Scaling often requires risky configuration changes to our cluster. For this reason, you shouldn’t try to make any of these changes directly to a production cluster that your customers or staff are depending on.

Instead, I would suggest that you create a new cluster and use blue-green deployment, or a similar deployment strategy, to buffer your users from risky changes to your infrastructure.

Vertically Scaling the Cluster

As we grow our application, we might come to a point where our cluster generally doesn’t have enough compute, memory or storage to run our application. As we add new microservices (or replicate existing microservices for redundancy), we will eventually max out the nodes in our cluster. (We can monitor this through our cloud vendor or the Kubernetes dashboard.)

At this point, we must increase the total amount of resources available to our cluster. When scaling microservices on a Kubernetes cluster, we can just as easily make use of either vertical or horizontal scaling. Figure 2 shows what vertical scaling looks like for Kubernetes.

We scale up our cluster by increasing the size of the virtual machines (VMs) in the node pool. In this example, we increased the size of three small-sized VMs so that we now have three large-sized VMs. We haven’t changed the number of VMs; we’ve just increased their size — scaling our VMs vertically.

Listing 1 is an extract from Terraform code that provisions a cluster on Azure; we change the vm_size field from Standard_B2ms to Standard_B4ms. This upgrades the size of each VM in our Kubernetes node pool. Instead of two vCPUs, each VM now has four. As part of this change, the memory and disk available to each VM also increase. If you are deploying to AWS or GCP, you can use the same technique to scale vertically, but those cloud platforms offer their own ranges of VM sizes.

We still have only a single VM in our cluster, but we have increased its size. In this example, scaling our cluster is as simple as a code change. This is the power of infrastructure-as-code, the technique where we store our infrastructure configuration as code and make changes to our infrastructure by committing code changes that trigger our continuous delivery (CD) pipeline.
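The listing itself is not reproduced in this text, so the following is only a rough sketch of what such an extract might look like, assuming the azurerm provider's azurerm_kubernetes_cluster resource. The resource label, the pool name and the omitted arguments are placeholders, not the author's actual code.

```hcl
# Hypothetical extract; the label "cluster" and the pool name "default" are assumptions.
resource "azurerm_kubernetes_cluster" "cluster" {
  # ... name, location, resource group, DNS prefix and identity settings omitted ...

  default_node_pool {
    name       = "default"
    node_count = 1               # still a single VM in the node pool
    vm_size    = "Standard_B4ms" # previously "Standard_B2ms"
  }
}
```

Committing a one-line change like this is what would trigger the CD pipeline to resize the node pool.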

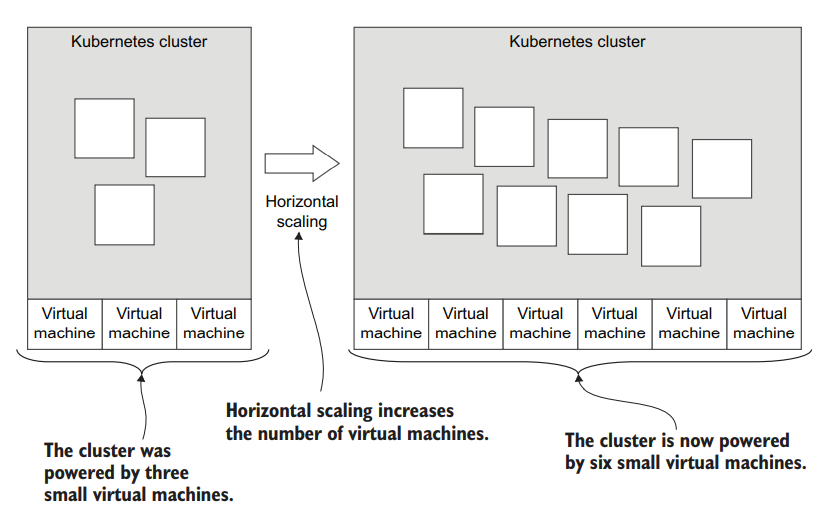

Horizontally Scaling the Cluster

In addition to vertically scaling our cluster, we can also scale it horizontally. Our VMs can remain the same size, but we simply add more VMs.

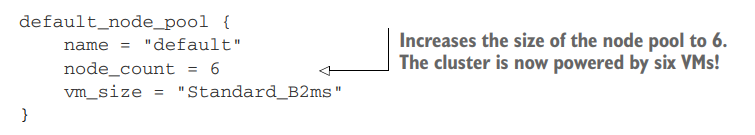

By adding more VMs to our cluster, we spread the load of our application across more computers. Figure 3 illustrates how we can take our cluster from three VMs up to six. The size of each VM remains the same, but we gain more computing power by having more VMs.

Listing 2 shows an extract of Terraform code to add more VMs to our node pool. Back in listing 1, we had node_count set to 1, but here we have changed it to 6. Note that we reverted the vm_size field to the smaller size of Standard_B2ms. In this example, we increase the number of VMs, but not their size; although there is nothing stopping us from increasing both the number and the size of our VMs.

Generally, though, we might prefer horizontal scaling because it is less expensive than vertical scaling. That’s because using many smaller VMs is cheaper than using fewer but bigger and higher-priced VMs.
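As a hedged illustration of the node-count change described above, a node pool scaled horizontally might look roughly like this. It assumes the same azurerm_kubernetes_cluster resource as before; the resource label and pool name are placeholders.

```hcl
# Hypothetical extract; only node_count and vm_size come from the text above.
resource "azurerm_kubernetes_cluster" "cluster" {
  # ... other required arguments omitted ...

  default_node_pool {
    name       = "default"
    node_count = 6               # more VMs in the pool
    vm_size    = "Standard_B2ms" # each VM stays at the smaller size
  }
}
```

Raising both node_count and vm_size in the same block would combine horizontal and vertical scaling.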

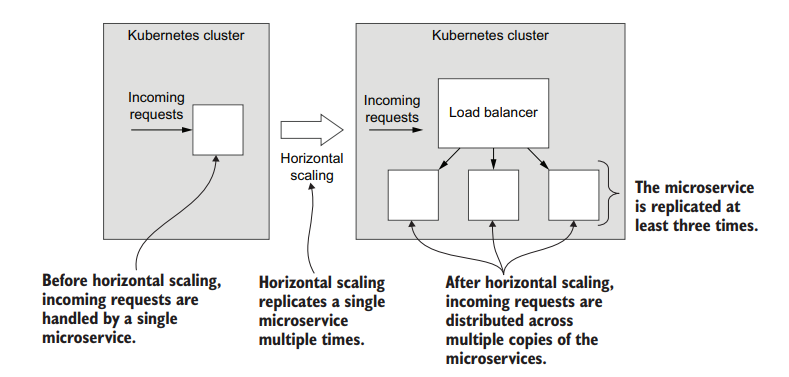

Horizontally Scaling an Individual Microservice

Assuming our cluster is scaled to an adequate size to host all the microservices with good performance, what do we do when individual microservices become overloaded? (This can be monitored in the Kubernetes dashboard.)

Whenever a microservice becomes a performance bottleneck, we can horizontally scale it to distribute its load over multiple instances. This is shown in figure 4.

We are effectively giving more compute, memory and storage to this particular microservice so that it can handle a bigger workload.

Again, we can use code to make this change. We do this by setting the replicas field in the specification for our Kubernetes deployment, as shown in listing 3.
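A minimal sketch of setting the replicas field might look like the following, assuming the deployment is defined through the Terraform Kubernetes provider's kubernetes_deployment resource. The service name, labels and image reference are made up for illustration.

```hcl
# Hypothetical deployment; "my-service" and the image reference are placeholders.
resource "kubernetes_deployment" "my_service" {
  metadata {
    name = "my-service"
  }

  spec {
    replicas = 3 # run three instances of this microservice

    selector {
      match_labels = {
        pod = "my-service"
      }
    }

    template {
      metadata {
        labels = {
          pod = "my-service"
        }
      }

      spec {
        container {
          name  = "my-service"
          image = "example.azurecr.io/my-service:1"
        }
      }
    }
  }
}
```

Kubernetes then keeps three pods of this microservice running and replaces any that fail.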

Not only can we scale individual microservices for performance, we can also horizontally scale our microservices for redundancy, creating a more fault-tolerant application. With multiple instances running, others are available to pick up the load whenever any single instance fails. This allows the failed instance of a microservice to restart and begin working again.

Elastic Scaling for the Cluster

Moving into more advanced territory, we can now think about elastic scaling. This is a technique where we automatically and dynamically scale our cluster to meet varying levels of demand.

Whenever demand is low, Kubernetes can automatically deallocate resources that aren’t needed. During high-demand periods, new resources are allocated to meet the increased workload. This generates substantial cost savings because, at any given moment, we only pay for the resources necessary to handle our application’s workload at that time.

We can use elastic scaling at the cluster level to automatically grow clusters that are nearing their resource limits. Yet again, when using Terraform, this is just a code change. Listing 4 shows how we can enable the Kubernetes autoscaler and set the minimum and maximum size of our node pool.

Elastic scaling for the cluster works by default, but there are also many ways we can customize it. Search for “auto_scaler_profile” in the Terraform documentation to learn more.
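As a sketch only, enabling the autoscaler on the node pool might look like this, assuming the azurerm provider as it stood when this post was written. The minimum and maximum counts are illustrative values, and the name of the enabling attribute should be checked against the provider version in use.

```hcl
# Hypothetical extract; min_count and max_count values are illustrative.
resource "azurerm_kubernetes_cluster" "cluster" {
  # ... other required arguments omitted ...

  default_node_pool {
    name                = "default"
    vm_size             = "Standard_B2ms"
    enable_auto_scaling = true # attribute name may differ in newer provider releases
    min_count           = 3    # never shrink below three VMs
    max_count           = 20   # never grow beyond twenty VMs
  }
}
```

The minimum keeps a baseline of capacity available, while the maximum puts a ceiling on cost.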

Elastic Scaling for an Individual Microservice

We can also enable elastic scaling at the level of an individual microservice.

Listing 5 is a sample of Terraform code that gives microservices a “burstable” capability. The number of replicas for the microservice is expanded and contracted dynamically to meet the varying workload for the microservice (bursts of activity).

The scaling works by default, but can be customized to use other metrics. See the Terraform documentation to learn more. To learn more about pod auto-scaling in Kubernetes, see the Kubernetes docs.
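One way this could be sketched is with the Terraform Kubernetes provider's kubernetes_horizontal_pod_autoscaler resource. The names and replica limits below are assumptions for illustration, and the target deployment is presumed to be defined elsewhere.

```hcl
# Hypothetical autoscaler; "my-service" and the replica limits are placeholders.
resource "kubernetes_horizontal_pod_autoscaler" "my_service" {
  metadata {
    name = "my-service"
  }

  spec {
    min_replicas = 3  # baseline number of instances
    max_replicas = 20 # upper bound during bursts of activity

    scale_target_ref {
      api_version = "apps/v1"
      kind        = "Deployment"
      name        = "my-service" # the deployment to scale
    }
  }
}
```

No metric is specified here, so the autoscaler relies on its default behavior; the provider documentation describes how to target other metrics.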

About the Book: Bootstrapping Microservices

You can learn about building applications with microservices with Bootstrapping Microservices.

Bootstrapping Microservices is a practical and project-based guide to building applications with microservices. It will take you from building a single microservice all the way up to running a microservices application in production on Kubernetes, ending up with an automated continuous delivery pipeline and using infrastructure-as-code to push updates into production.

Other Kubernetes Resources

This post is an extract from Bootstrapping Microservices and has been a short overview of the ways we can scale microservices when running them on Kubernetes.

We specify the configuration for our infrastructure using Terraform. Creating and updating our infrastructure through code in this way is known as infrastructure-as-code, a technique that turns working with infrastructure into a coding task and that paved the way for the DevOps revolution.

To learn more about Kubernetes, please see the Kubernetes documentation and the free Introduction to Kubernetes training course.

To learn more about working with Kubernetes using Terraform, please see the Terraform documentation.

The post Scaling Microservices on Kubernetes appeared first on Linux Foundation – Training.

Top Enable Sysadmin content of March 2021

Check out our top articles from a record-breaking month.

Read More at Enable Sysadmin

Linux Foundation Training Scholarships Are Back! Apply by April 30

Linux Foundation Training (LiFT) Scholarships are back! Since 2011, The Linux Foundation has awarded over 600 scholarships for more than a million dollars in training and certification to deserving individuals around the world who would otherwise be unable to afford it. This is part of our mission to grow the open source community by lowering the barrier to entry and making quality training options accessible to those who want them.

Applications are being accepted through April 30 in 10 different categories:

- Open Source Newbies

- Teens-in-Training

- Women in Open Source

- Software Developer Do-Gooder

- SysAdmin Super Star

- Blockchain Blockbuster

- Cloud Captain

- Linux Kernel Guru

- Networking Notable

- Web Development Wiz

Whether you are just starting in your open source career, or you are a veteran developer or sysadmin who is looking to gain new skills, if you feel you can benefit from training and/or certification but cannot afford it, you should apply.

Recipients will receive a Linux Foundation training course and certification exam. All our certification exams, and most training courses, are offered remotely, meaning they can be completed from anywhere.

Winners will be announced in early summer.

The post Linux Foundation Training Scholarships Are Back! Apply by April 30 appeared first on Linux Foundation – Training.